A Comprehensive Guide to A/B Testing

It’s just one button, but it means so much—clicking the button is the one thing you need a visitor to do so you can get to know them better and demonstrate how you’ll solve their most pressing problem.

It’s just one subject line, but it’s the difference between opening an email and deleting it.

It’s just one color, but changing it might change how your customers feel about and interact with your brand.

When it comes to marketing and sales, sometimes we focus most of our attention on the big picture, so it’s easy to forget that small changes to landing pages, emails, social media posts, and articles can have a noticeable impact on our desired outcomes.

So, what is A/B testing?

A/B testing—also known as split testing—is about changing one of those small choices, such as a button, subject line, or color, and measuring the outcome for future implementation.

The good news is that you don’t have to be a software engineer, data scientist, or have a complicated technical background to start using A/B testing to refine your marketing strategies. You just need enough curiosity to start asking questions.

This guide will give you a list of 24 elements that you should be A/B testing, tools to help you get started, and pitfalls to avoid.

But First, Here’s How A/B Testing Mitigates Risk

Doing what you’ve always done with your marketing campaigns can be risky. You might unintentionally wander into stagnation and risk losing valuable engagement. But doing what you’ve never done before is also risky because the changes might feel ambiguous and the projected outcomes might be hard to predict.

A/B tests mitigate risk by giving you the chance to measure if you’re missing opportunities or if your current approach is the best strategy. You can run small consistent A/B tests throughout big changes and be more confident you’ll reach your intended goals.

Getting Started With A/B Testing

Even though you see the value in this work, getting started might feel like a big task. Here is a step-by-step process to launch your A/B testing strategy.

Step 1: What’s The Purpose?

The only way you know what works and what doesn’t is if you know exactly what you’re trying to achieve with your testing. If you find yourself thinking, “Well, I send emails, post on social media, and maintain a website because that’s what everyone else does, so I should too,” then ask yourself why you haven’t eliminated that platform, and you’ll find the purpose.

For example, you can’t delete your website because that’s where people purchase your products and services. You can’t delete email because it drives people to the website where customers make purchases, and the same goes for social media.

You might also discover that certain platforms don’t have a purpose. For example, maybe you have a Facebook page because that was the first social platform your company created. However, it’s consistently a low-performing channel. You realize you don’t care about increasing followers because engagement is always sparse—not to mention you haven’t had a good lead from it in months…or years.

These overarching purposes can also be reviewed on a granular level. Each page and every campaign should have a purpose.

If the purpose of your website is to make sales or capture leads, you want to see which changes lead to an increase in those outcomes. If the purpose of LinkedIn is to push people to your website, then you want to see which changes increase website traffic.

Step 2: Make a Hypothesis

Here’s an A/B testing example: Suppose the purpose of the website homepage is to capture qualified leads by increasing the number of people who sign up for a free trial.

Currently, your website has three key elements urging people to sign up.

- A headline that says, “Start Your Free Trial Today”

- Copy describing how long the free trial will last and what’s included

- A blue button that reads, “Sign Up”

What if the headline instead says, “Start Your Free Trial Now”? What if the button was yellow instead of blue? What if the copy was updated stating that people don’t need a credit card to start the trial?

These can all be separate A/B tests. Maybe your hypothesis is as simple as, “a yellow button leads to more conversions than a blue button.” Test it and measure performance. Did the conversions increase, decrease, or stay the same after you made the change?
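To judge whether conversions really changed, it helps to check that the difference is bigger than random noise. The sketch below is one common way to do that, a two-proportion z-test, and is not the only valid method. The function name and the visitor and conversion counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up numbers: blue button (A) vs. yellow button (B).
z, p = two_proportion_z_test(conv_a=120, n_a=2400, conv_b=156, n_b=2400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a common threshold) suggests the yellow button's lift is unlikely to be chance alone. A large one means the test was inconclusive, not that the buttons are proven equal.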

Step 3: Prioritize Your Testing Schedule

There are dozens of elements you could test on a single page, and even more elements you can test on email and social media. Even on the biggest teams, there aren’t enough resources or time to test everything. Choose which pages or strategies have the biggest impact on your business to focus your attention on first.

Step 4: Test and Report

Once you lay the groundwork, the next step is to run your first test. Test different elements and report your results to stakeholders. Some teams struggle at this stage: they complete high-value A/B tests, but the results are never implemented.

For example, you might discover that subject lines that ask a question have a higher open rate on email than other subject-line types. But, if there is one team conducting A/B tests and another writing emails, the internal communication must be strong for everyone to understand what’s working and what isn’t.

Start With Subtraction

It’s not always about adding or changing elements. Sometimes the best results can come from subtracting elements from a page or post. It’s also an easy way to get started because you don’t need to create anything new to test.

Simply delete some copy, shorten a headline, or remove a section, and see how your audience responds. This strategy might work, as eliminating distractions can reduce decision fatigue and increase conversions.

Additional Elements to A/B Test on Email, Social Media, and Websites

Email Elements to A/B Test

Most email software will offer some A/B testing tools built into the platform. The winner is typically determined by open rate, click-through rate, or some type of conversion. Often, you can either have the email provider select the winner after a certain amount of time or you can manually identify the winner.

Layout

Most email distribution software will offer templates. You can have multiple images or just one. You can change colors or where the call-to-action (CTA) buttons appear in the copy. Experiment with all the possibilities to determine if there is a specific layout that converts.

HTML vs. Plain Text

Emails designed with HTML have all the familiar elements of a promotional email: images, buttons, backgrounds, color, etc. (i.e., elements of the layout you might be A/B testing). Plain text emails don’t have these pretty elements.

So why would you test these two approaches?

HTML emails are best-known for their use in marketing, so email providers like Google have automatically tagged these emails as promotional in people’s inboxes. People aren’t rushing to read the emails in this tab, so if you’re lumped in here, improving open and click-through rates won’t be easy. Plain text emails can sometimes slip past the promotions folder and land your message in an easier-to-spot location.

While plain text emails appear more personal, it’s harder to make the CTA stand out, so you’ll have to compare the open rate with the click-through rate to determine if the emails are achieving their intended goals.

Length

What if your email was only four sentences long? What if it was four paragraphs long? Experiment with body copy to determine if your audience finds a comprehensive approach or brevity more compelling.

CTAs and Links

Your CTA will usually land at the top or bottom of the email, and you can test a variety of locations to determine which position results in the most clicks. You can also compare having just one link in an email with having several.

Subject Line

Subject lines are the key to increasing how many people open your emails. Testing this element can help you refine what stands out in people’s inboxes and earns clicks. A/B testing this element often means you’ll send a certain percentage of the list one subject line and another percentage the other. When you discover a winner, you can send the winning subject line to the remaining list. After that, continue to test it again… and then test it again against different approaches until you know what works best.

Here are some subject line variations to experiment with:

- Highlight a promotion – Example: 20% Off For New Members

- Use personalization – Example: 20% Just For You, [Name]

- Ask a question – Example: Do you want to save BIG on your first purchase?

- Create urgency – Example: Save 20% (Offer Ends at Midnight)

- Include an emoji – Example: Save 20% on Your First Purchase

- Use Numbers – Example: 5 Ways to Save on Your Next Purchase at [Company]

Preview Text

With the right approach, preview text in a marketing email helps support the subject line and improve open rates. This is a brief description that often shows up as a preview next to the subject line. Don’t forget about this often forgotten yet powerful element of email marketing! Mix and match the subject line variations with the preview text to see what works well together.

For example:

Subject line: Do you want to save BIG on your first purchase?

Preview Text: Save 20% (Offer Ends at Midnight)

Or provide additional context:

Subject line: You’re Invited to an Exclusive Networking Event

Preview Text: October 30 at 12 p.m. EST

Just like subject lines, you can test preview text by splitting your list in half or by a certain percentage and experimenting with different approaches. Typically, the email with the highest open and click-through rates will win the A/B test.
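Most email platforms handle the split for you, but the idea is simple enough to sketch. The example below assumes a hypothetical subscriber list and a 20% test share: it randomly picks two equal test groups and holds everyone else back until a winner is chosen. The function and parameter names are illustrative, not from any particular email tool.

```python
import random


def split_for_test(subscribers, test_percent=20, seed=42):
    """Randomly pick two equal test groups; everyone else waits for the winner."""
    shuffled = list(subscribers)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    group_size = len(shuffled) * test_percent // 100 // 2
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    remainder = shuffled[2 * group_size:]
    return group_a, group_b, remainder


# Hypothetical list of 1,000 addresses.
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_test(subscribers)
print(len(group_a), len(group_b), len(remainder))  # 100 100 800
```

Shuffling before splitting matters: if you simply take the first 100 addresses, you might test only your oldest subscribers, who may behave differently from the rest of the list.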

Social Elements to A/B Test

There are often two types of overall A/B testing on social media. The first type is testing ad performance and the second is testing the performance of posts competing for organic reach or impressions.

If you’re testing ad performance, there are A/B testing tools offered on social platforms. For example, Meta’s Ads Manager platform has an experiment tool that lets you duplicate ads and test different variables, such as captions, photos, headline text, etc. LinkedIn, Twitter, Pinterest, Snapchat and TikTok all have similar features.

Testing ads is straightforward because you duplicate content and change one variable. Testing content that competes for organic views is more complicated because you can’t simply share the same post twice and change one variable. If only it were that easy! The best strategy is to keep as many variables the same as possible. For example, spend one week posting content at the same time every day; then change the posting time the following week.

Regardless of what type of content you’re testing, there are some standard elements that you can A/B test for social media.

Captions

We all have assumptions about which captions will have the most impact. We might think our audience wants short and snappy captions, but in reality, a post with a long caption on a platform like LinkedIn might outperform shorter captions. You’ll only know by experimenting with your approach.

Besides length, some of the elements you can change in your text captions are tone, emojis, tagged accounts, and numbered lists.

Headlines

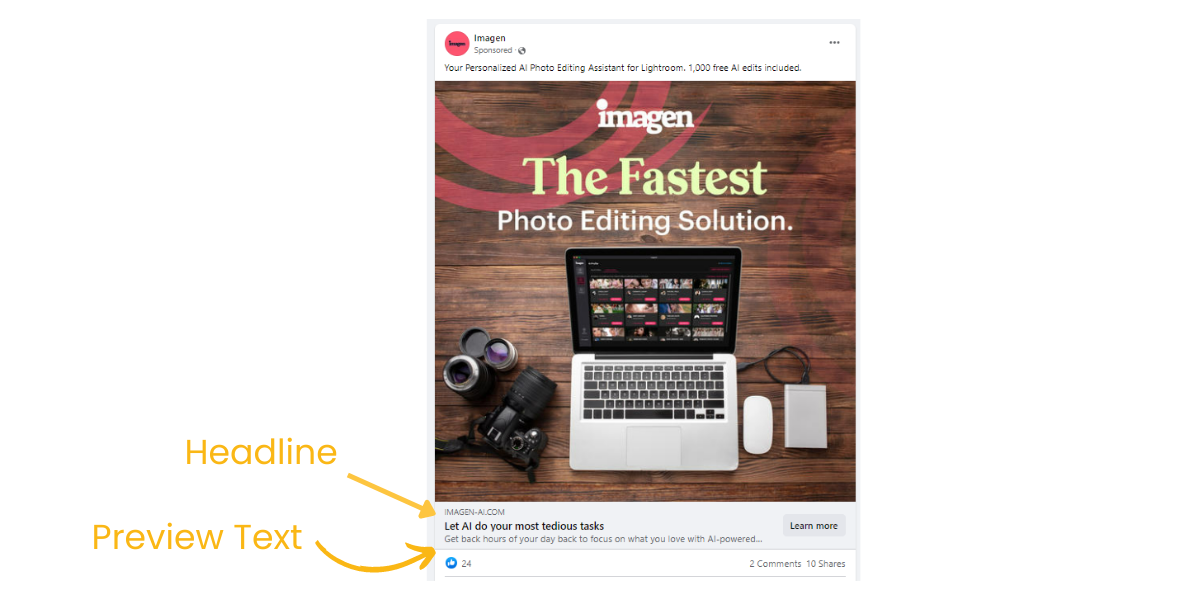

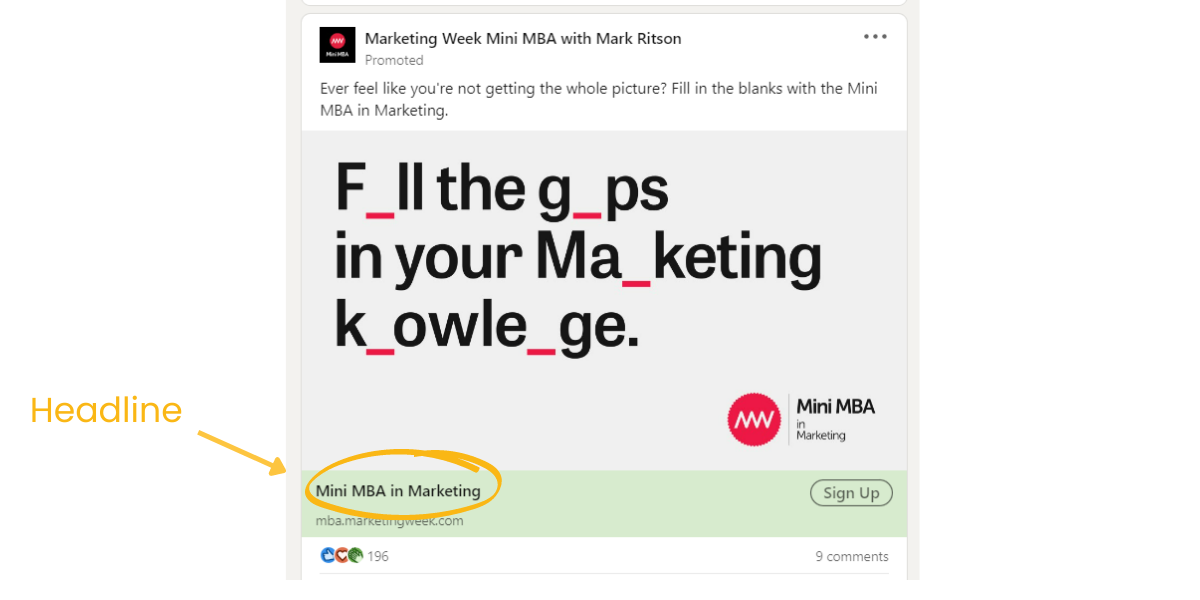

Similar to email, headlines are like a subject to your post and are sometimes followed by preview text that can be used to provide additional context. You can change the headline and preview text to see if it results in more clicks. For example, check out the images below.

Media Type

The media types most common on social media platforms include links, images, graphics, and videos. However, platforms are constantly introducing new features.

Instagram launched Stories in 2016 and Reels in 2020. Facebook adopted the same features after their premiere on Instagram. LinkedIn launched Newsletters for business pages earlier this year (2022). Both LinkedIn and Twitter are experimenting with live audio features. Media types also include carousel ads, posts, polls, and memes.

If your typical strategy includes common elements, experiment with new features or features you haven’t used before to see if your organic reach, engagement, or paid reach improve.

CTA Buttons

Rather than getting to craft unique buttons like you can on a website, social platforms usually have a select few button types.

On Meta Business Suite, you can choose from several options like apply now, book now, order now, shop now, install now, call now, etc. On LinkedIn, you can choose from sign up, contact us now, register now, learn more, or visit website.

You can A/B test which CTA results in more conversions.

Time of Day

Organic reach can be affected by what time you post your content. Several platforms might offer insights on when your audience is most active, but it still helps to test if morning, afternoon, or evening posts perform better.

Content Types

You likely have several different types of content you post to social platforms, including testimonials, promotions, behind-the-scenes, resources, etc. Experiment with the different types of posts to see what boosts reach and engagement.

A mixture of all these content types helps keep your page interesting. Even if one type outperforms the others, it just means you might do three behind-the-scenes posts a week instead of one.

Hashtags

Hashtags play an important role in discoverability for many platforms, including Instagram, Twitter, LinkedIn, and Pinterest. Start with building a bank of hashtags. Then, A/B test which hashtags and how many hashtags impact performance.

Target Audience

Choosing a target audience is typically reserved for social media ads. You can A/B test this feature by duplicating ads and sending the same ad to separate segments to measure performance. You’ll learn which audiences are most likely to convert on different platforms and optimize your advertising dollars.

Profile Elements

Beyond posts and ads, you can also experiment with profile elements like bios and profile pictures. It’s possible that changes to these elements could lead to more followers or more website traffic.

For example, bios on social profiles are a couple of short sentences explaining your company. More often than not, they were written when the profile launched and haven’t been changed since.

It’s worth A/B testing different approaches and measuring if they affect conversions, website traffic, or follower count.

Website Elements to A/B Test

There are a limited number of outcomes you can measure when you’re A/B testing email and social media, such as clicks, follows, open rate, engagement, etc. That is not the case with A/B testing on websites.

You can use dozens of metrics to A/B test the performance of different elements, including:

- Single-page views

- Time on page

- Pages viewed in a session

- Bounce rate

- Form completion

- Purchases

- Conversion rate

- Button clicks

- Scroll depth

- Links clicked

Since there is so much data available on websites, you have to determine when information is valuable and when it’s just noise.

If the most valuable action a visitor takes on your website is filling out a contact form, then conversion rate or form completion may be the top priority when you’re A/B testing elements. That being said, it’s important to review page views. A high bounce rate may be a red flag that you need to optimize your page to ultimately increase your form conversions.
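If your analytics tool lets you export session data, you can compare these metrics side by side for each version of a page. The sketch below assumes a hypothetical export format (one record per session with `pages_viewed` and `submitted_form` fields) and treats a single-page session as a bounce. Your analytics platform may define a bounce differently.

```python
def summarize(sessions):
    """Compute bounce rate and form conversion rate for a list of sessions."""
    total = len(sessions)
    # Assumption: a "bounce" is a session that viewed exactly one page.
    bounces = sum(1 for s in sessions if s["pages_viewed"] == 1)
    conversions = sum(1 for s in sessions if s["submitted_form"])
    return {
        "sessions": total,
        "bounce_rate": bounces / total,
        "conversion_rate": conversions / total,
    }


# Made-up session records for two versions of the same page.
sessions_by_variant = {
    "A": [
        {"pages_viewed": 1, "submitted_form": False},
        {"pages_viewed": 3, "submitted_form": True},
        {"pages_viewed": 1, "submitted_form": False},
        {"pages_viewed": 2, "submitted_form": False},
    ],
    "B": [
        {"pages_viewed": 2, "submitted_form": True},
        {"pages_viewed": 1, "submitted_form": False},
        {"pages_viewed": 4, "submitted_form": True},
        {"pages_viewed": 2, "submitted_form": False},
    ],
}

report = {name: summarize(rows) for name, rows in sessions_by_variant.items()}
for name, stats in report.items():
    print(name, stats)
```

With only four sessions per version, these numbers are far too small to act on. The point is the shape of the comparison: pick the metric that matches the page's purpose, then track the supporting metrics alongside it.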

Here are some of the elements you should A/B test on your website:

Buttons

Buttons are how we direct people to other places on the website that are more relevant to them. They’re also the element that people click to sign up for newsletters, make purchases, and download content. Experiment with the button copy “Sign up” versus “Join now,” etc. You can also experiment with the button’s size, color, and position.

CTA

Your call to action often includes a button, so you should A/B test the button’s copy, size, color, and position. But the CTA can also include supporting copy and images. Experiment with all of these elements to see if they improve conversions. One of the most powerful ways to A/B test a CTA is by changing its position on the page. A CTA near the top, before a visitor has to scroll, might have a higher conversion rate.

Pop-Up Offers

Done well, pop-up offers can turn visitors into customers. But done poorly, they can be a frustrating distraction. You can experiment with pop-up offers on specific pages where you know that visitors might be interested in making a purchase but just aren’t ready. The offer can be a promotional price, a valuable free offer (like a download), or a way to create urgency and encourage visitors to make their decision by letting them know about an approaching deadline (like the start of a new course).

The key is to make sure that the pop-up is relevant to the page but also easy to close. You can also experiment with its position on the page. Some pop-ups dim the whole page and put the pop-up in the center. Others are less intrusive and take up space at the bottom of the screen or appear on the left or right side of the page.

Images and Video

When someone lands on your homepage (or any page), there’s usually an image or video of some type at the top of the page accompanied by the copy. Experiment with this element by using different types of images. You can also measure how video performs in comparison to static images or graphics. Finally, determine if there is a certain number of images throughout the page that may impact performance.

Videos are often better at describing products and demonstrating how to use them. However, some videos don’t lead to conversions, or worse, they slow down the website’s page speed.

Headlines

Just like email and social media, you can experiment with the headlines on your website to see if they drive more traffic or result in more conversions. Test page headlines that contain numbers versus ones that don’t. You can also test a headline’s urgency, tone, and length.

Navigation

Menu navigation at the top of the page helps visitors find information more quickly. Unfortunately, sometimes this feature can become bogged down with dozens of options that ultimately lead to decision fatigue and confusion. Test a simple navigation menu to see if it results in a better user experience. You can also test a navigation bar that drops down or one that opens on the left or right side of the page instead of at the top.

Page Length

A short page might have one call to action with no scrolling required to preview all the content. It could be a simple task asking first-time visitors to complete a form or join a mailing list. A longer page might have several thousand words with testimonials, product or service descriptions, and pricing information.

Depending on the context and buyer preferences, one approach might perform better than others. Most websites will have a mix of short and long pages, so take time to experiment on the pages that could have the biggest impact on revenue.

A Note on Blogs

Blogs or articles support a constant stream of new and interesting pages. For search engine optimization (SEO) purposes, blogs between 1,500 and 2,500 words tend to perform better. But that might not be the case for your company, so experimenting with word count and page length could help you understand what is most compelling for your audience.

When you are A/B testing blogs or articles, measure how many people sign up for email, how long they stay on the page, or which content drives traffic to the website. Most importantly, analyzing the same metric consistently will help you compare your strategies over time.

Personalization

You can add personalization to pop-ups and other website elements. However, these can make people uncomfortable, so you’ll really need to know your audience well before starting this experiment. Personalization also requires advanced data collection methods and could create privacy concerns depending on the method.

Personalization might be something like, “Hi, [Name], how can we help you?” on a pop-up in the bottom-left corner of the screen. You could also include personalization when a visitor is securely logged into your website to shop. This can often alleviate privacy concerns and add a touch of brand personality and connection instead.

Pricing Copy

The page where you list all the prices for your products or services is arguably one of the most important pages to test and understand what results in increased revenue. There are entire fields of study dedicated to understanding consumer behavior and why people buy.

Below are the pricing page elements you should A/B test.

- The number of options: Buyers faced with too many options might experience decision fatigue and decide to think about their purchase rather than act on it.

- Pricing high versus pricing low: Sometimes low prices might reduce purchases because buyers think the product or service is low quality.

- Feature lists: Should you show everything the buyer will receive with their purchase or just the most important features?

- Font size: Big prices (even if they’re low) might translate in the buyer’s mind as expensive.

- Product order: If you have a list of products or services (or tiers), experiment with the order to see if it changes conversion rates or affects which option the average buyer purchases.

- Page copy: The best sales copy emphasizes outcomes over features—for example, “build the workflow you want” vs. “customizable roadmap.”

Alternative Approaches

Some companies, such as clothing retailers, have hundreds or thousands of products. In this case, there isn’t one pricing page, but it’s still helpful to A/B test pricing elements.

For example, you can test the font size or color of the price, different product images and image sizes, or even the product descriptions or layout. In this scenario, you might try two different approaches on similar products to see which one performs better.

Test and Test Again To Optimize Your Marketing Strategies

The winner of an A/B test won’t necessarily be the best strategy forever. Continually test elements to find what’s working and what isn’t, even if you tested them in the past. Additionally, don’t assume that because something worked on one page or in one context, it’s the right strategy for all of your pages and platforms.

“B” Won’t Always Win (and That’s Okay)

Not every experiment will result in a change to your website, email strategy, or social posts. Sometimes it doesn’t matter if the button is yellow or blue; the click-through rate stays the same. Occasionally, your original copy is the best option compared to the alternative versions.

These outcomes don’t mean your A/B test failed because you still captured valuable insights—it just wasn’t what you were expecting. After all, if you already knew the answer, you wouldn’t need to test it.

A/B Testing Pitfalls

You might have a process in place to launch an A/B testing strategy, but there are a few pitfalls to avoid when you’re starting this type of work.

Testing Without Traffic

If you have a new website or a small mailing list, your results will always be based on a small sample size. You don’t want to create a long-term strategy or make expensive decisions based on limited input.

If you’re just getting started, sometimes the best approach is to build the content and traffic first based on marketing best practices and suggested testing for your specific platform and goals. You can then continue to test specific elements after you’re more established.

So, how many followers, subscribers, or visitors is enough? As a rough rule of thumb, aim for about 1,000 email subscribers or at least 1,000 website visitors per week before you rely on the results. The exact number depends on your current conversion rate and the size of the change you hope to detect, so use a sample size calculator to estimate how long you should run your test.
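If you’re curious what a sample size calculator does behind the scenes, here is a minimal sketch using a standard textbook approximation for comparing two conversion rates. The function names, the 5% and 6.5% rates, and the defaults (95% confidence, 80% power) are assumptions for illustration. A dedicated calculator may use a slightly different formula.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH version to detect the difference."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided confidence level
    z_beta = NormalDist().inv_cdf(power)           # chance of catching a real lift
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def weeks_to_run(n_per_variant, weekly_visitors):
    """Weeks needed if weekly traffic is split evenly between two versions."""
    return math.ceil(2 * n_per_variant / weekly_visitors)


# Made-up goal: lift a 5% conversion rate to 6.5%.
n = sample_size_per_variant(baseline_rate=0.05, expected_rate=0.065)
print(n, "visitors per version;", weeks_to_run(n, weekly_visitors=1000), "weeks")
```

Notice how quickly the requirement grows as the expected lift shrinks: detecting a small improvement takes far more traffic than detecting a large one, which is why low-traffic sites are better off testing bold changes.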

For advertising on social media, you choose your target audience, so you can start A/B testing on these platforms even before you have an established follower base.

Losing the Brand

Just because something is performing better doesn’t mean it’s the best choice. It might be the wrong tone for your brand or the wrong aesthetic. As a result of going off-brand, you might lose traction with your target audience or reduce overall awareness.

Testing Too Many Elements

A/B testing consists of measuring two variations of a single variable, such as a headline, copy, or button. It’s not about making two very different emails, social posts, or web pages and seeing which performs better. At some point, you can’t truly know what affects the results. Was it the shorter headline, the copy, or the button?

A Note on Multivariate Testing vs. Iterative Testing

While it is a pitfall to test too many variables for an A/B test, there are multivariate tests that allow you to measure a combination of elements to determine which elements work best.

Meta Business Suite has this feature for ads on platforms like Instagram and Facebook. To use dynamic ads, you’ll create several headlines, upload multiple images, and make caption variations. The platform will create several versions of the ad using those elements and determine which combination yields the best results.

These same tests are more complicated to execute on websites and require a larger audience to determine the winner.

Multivariate testing can help you determine how different elements interact to create better outcomes. It is different from A/B/C testing, which compares three or more versions of a single element. Iterative testing means conducting a sequence of single-variable tests on each element to optimize them one at a time.
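To see why multivariate tests need a larger audience, count the versions involved. The sketch below reuses the free-trial example from earlier in this guide (two headlines, two button colors, two copy options) and lists every combination a multivariate test would have to compare. The variable names are illustrative.

```python
from itertools import product

headlines = ["Start Your Free Trial Today", "Start Your Free Trial Now"]
button_colors = ["blue", "yellow"]
copy_variants = ["standard copy", "no credit card required"]

# Every possible pairing of headline, button color, and copy.
combinations = list(product(headlines, button_colors, copy_variants))

for headline, color, copy in combinations:
    print(f"{headline} | {color} button | {copy}")

print(len(combinations), "versions to test")  # 2 * 2 * 2 = 8
```

Each of those eight versions needs enough traffic on its own to produce a reliable result. An iterative approach would instead run three separate two-version tests, one element at a time.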

How Metric Marketing Can Help Your Company

A/B testing can be time-consuming, so let us help! At Metric Marketing, our staff of expert marketers can design an A/B testing strategy and present the results to refine your overall marketing approach to grow your business.

Don’t sweat the legwork—that’s what we’re here for. Not only do we develop winning marketing strategies, but we also give our clients a comprehensive playbook. And, our ongoing monthly services include a consistent effort in perfecting your strategy, monitoring performance and analytics, and so much more.

Give us a call at (734) 404-8714 or fill out our online contact form today. We look forward to hearing from you!
